But Can It Run Crysis?

The ultimate question when evaluating the performance of a computer

It’s finally finished: your perfect gaming rig. Top-of-the-line CPU, GPU, and everything in between; there’s no graphical challenge you cannot overcome. That is, except for Crysis. The rhetorical question (even recognized by the dictionary) that every computer enthusiast must be able to answer is this one: But can it run Crysis?

You boot up the game, max out the settings, and find that you can barely keep the framerate over 60 FPS. What’s the deal?

A Look Into the Past

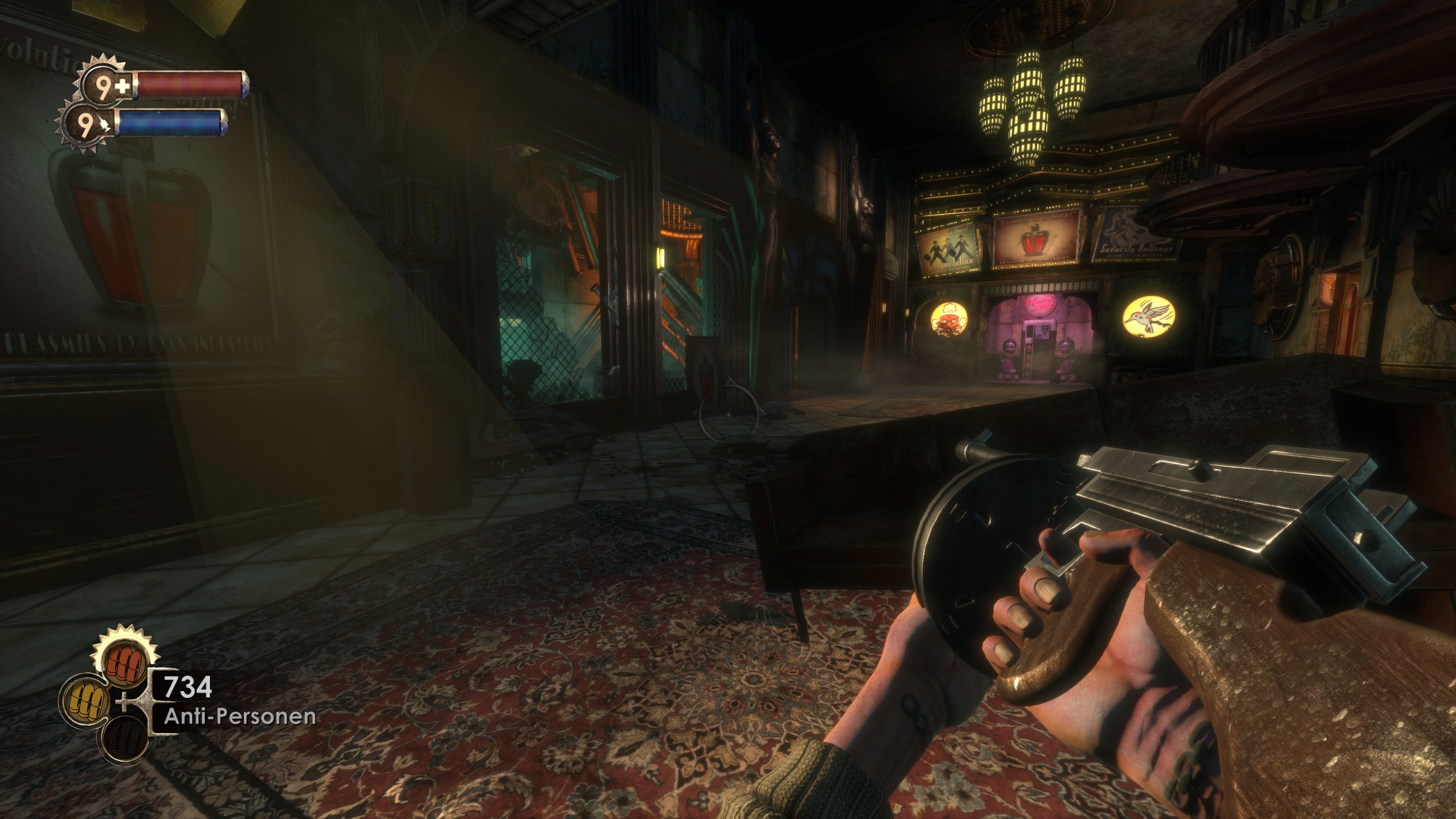

Before answering the question, we must first understand the gaming industry back when Crysis was released in 2007. PC gaming took off during that period, with classics such as The Orange Box, The Elder Scrolls IV: Oblivion, and BioShock released to hungry gamers. Yet even among these giants, Crysis stood out: it somehow managed to look generations ahead of its peers.

(Top-left) BioShock. Source: Steam Community. (Top-right) Team Fortress 2 (part of The Orange Box). Source: YouTube. (Bottom-left) The Elder Scrolls IV: Oblivion. Source: YouTube. (Bottom-right) Crysis. Source: Reddit.

At a glance, it’s clear that Crysis looks substantially better. Between the lighting on the foliage, the reflections in the water, and the almost gradient-like colouring of the sky, Crysis blew its competitors away. And it wasn’t just static screenshots that looked great; even in motion, the game felt like something out of the future at the time.

Why was this the case? Every game is built on top of a game engine, a collection of tools that defines how the screen is rendered (graphics), how objects interact (physics), how characters behave (AI), and more. For the visual side, many engines call into graphics APIs such as OpenGL or DirectX to do the actual rendering.
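To make the idea of an engine a little more concrete, here is a toy Python sketch of the per-frame loop at the heart of many engines. The `Engine` class, its three subsystem lists, and the order in which they run are all assumptions made for illustration; real engines such as CryEngine 2 are vastly more complex and organize their work differently.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical subsystem type: a function called once per frame with the
# time elapsed since the previous frame, in seconds.
Subsystem = Callable[[float], None]


@dataclass
class Engine:
    """Toy engine that runs its subsystems in a fixed order every frame."""

    physics: List[Subsystem] = field(default_factory=list)
    ai: List[Subsystem] = field(default_factory=list)
    render: List[Subsystem] = field(default_factory=list)

    def tick(self, dt: float) -> None:
        # One plausible order (an assumption): simulate the world, let
        # characters react, then draw the result. The render stage is where
        # a real engine would call into a graphics API like DirectX or OpenGL.
        for stage in (self.physics, self.ai, self.render):
            for system in stage:
                system(dt)


log: List[str] = []
engine = Engine(
    physics=[lambda dt: log.append(f"physics: step {dt:.4f}s")],
    ai=[lambda dt: log.append("ai: update enemies")],
    render=[lambda dt: log.append("render: submit draw calls")],
)
engine.tick(1 / 60)  # one frame at 60 FPS
print(log)  # ['physics: step 0.0167s', 'ai: update enemies', 'render: submit draw calls']
```

Calling `tick` 60 times per second is what produces a 60 FPS game; in this serial loop, if any stage runs long, the whole frame is late.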

Each of the games above was built on a different engine: The Orange Box on the Source Engine, BioShock on Unreal Engine, The Elder Scrolls IV: Oblivion on Gamebryo, and Crysis on CryEngine. This is why games that run on the same engine tend to look and play very similarly (I’m looking at you, Elder Scrolls/Fallout/Starfield). So if the game engine dictates how a game will eventually look, what did CryEngine do that the others didn’t?

To be honest, everything.

As mentioned above, Crysis was developed on CryEngine; the second iteration, to be exact, called CryEngine 2. Looking at the SIGGRAPH 2007 paper that described what the team set out to do, the set of features they wanted the engine to have is pretty impressive for the time:

- Cinematographic quality rendering

- Dynamic lighting and shadowing

- HDR rendering

- Support for multi-CPU and GPU systems

- Dynamic and destructible environments

Not only were these features that competitors did not readily provide, but some were also far ahead of their time: parallax occlusion mapping (POM), for example, was not found in other engines until the next generation of consoles, and it was still being patched into games as recent as Farming Simulator 22! Let’s look at how the Crytek team created this behemoth of an engine.

Source: YouTube.

No Limits (Kind Of)

The main limitation many developers faced then, and still face today, is the audience and range of hardware they choose to target. The wider the range, the more sacrifices they have to make. Developers tend to design for the lowest common denominator, so that the game meets its standard no matter the hardware. Moving away from this approach was the most important principle the Crytek team followed when working on the second version of the engine.

The SIGGRAPH paper mentions that the most significant lesson from the first iteration of the engine was that “In Far Cry [the game that ran on CryEngine 1], we supported graphics hardware down to NVIDIA GeForce 2…We decided to reduce the number of requirements for a cleaner engine.”
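One way to picture the lowest-common-denominator problem is as a set intersection: an engine can only rely on the features that every supported GPU provides. The Python sketch below uses made-up feature sets for three hypothetical GPU generations (illustrative labels, not an exact record of what each card supported) to show how dropping the oldest target widens the usable feature set, which is the kind of “cleaner engine” the quote describes.

```python
from typing import Iterable, Set

# Made-up feature sets for three GPU generations. These are illustrative
# labels only, not an exact record of what each card supported.
GPU_FEATURES = {
    "GeForce 2 era": {"hardware T&L", "multitexturing"},
    "DirectX 9 era": {"hardware T&L", "multitexturing", "pixel shaders", "HDR"},
    "DirectX 10 era": {"hardware T&L", "multitexturing", "pixel shaders", "HDR",
                       "geometry shaders"},
}


def usable_features(targets: Iterable[str]) -> Set[str]:
    """Features the engine can rely on everywhere: the intersection over all targets."""
    return set.intersection(*(GPU_FEATURES[t] for t in targets))


# Supporting every generation leaves only the oldest card's features.
print(sorted(usable_features(GPU_FEATURES)))
# Dropping the oldest generation unlocks pixel shaders and HDR.
print(sorted(usable_features(["DirectX 9 era", "DirectX 10 era"])))
```

Every generation added to the support list can only shrink the intersection, never grow it, which is why a wider hardware range means more sacrifices.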

The team went into the new iteration intending to build an engine (and eventually, a game) that ran on the best hardware available. They wanted to push the limits of what they could do graphically and didn’t let slower hardware hold them back. This was apparent in the platforms they supported with Crysis: they built it only for PC. Supporting consoles would have meant making the game run on up to three different hardware configurations, compromising its visual fidelity. Scoping the project down to a smaller audience meant they could squeeze as much as possible out of the hardware of gamers who met their requirements. By limiting their potential audience, they were freer to create the best engine they could.

And that’s precisely what they did. CryEngine 2 and the resulting Crysis had an absurd amount of detail put into them. Many games make compromises that don’t affect the primary gameplay experience, but the Crytek team spared no expense. Without going too deep into the graphical details, here are some high-level things the game did:

- Implementing the entire sky with a light-scattering equation instead of a skybox (which is what most games did), which meant realistic sky lighting and the ability to support day-night cycles

- Drawing out each leaf on plants individually instead of using textures

- Making almost every object in the game an interactive physics object

Because this work was split between the two processors, running the game was taxing on both the CPU and the GPU of any given PC.
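For a taste of what a light-scattering sky involves, the sketch below evaluates two textbook pieces of Rayleigh scattering: scattering strength proportional to 1/λ^4, and the Rayleigh phase function 3/(16π)·(1 + cos²θ). It is a heavily simplified single-scattering toy, not CryEngine 2’s actual sky model; the wavelengths picked for red, green, and blue are assumptions, and a real implementation integrates along each view ray through the atmosphere. Still, it shows why short (blue) wavelengths dominate and why the result depends on the sun’s direction, which is what lets a computed sky follow a day-night cycle instead of relying on a fixed skybox texture.

```python
import math

# Representative wavelengths in nanometres (an assumption; other picks work too).
WAVELENGTHS_NM = {"red": 680.0, "green": 550.0, "blue": 440.0}


def rayleigh_strength(wavelength_nm: float, reference_nm: float = 680.0) -> float:
    """Relative Rayleigh scattering strength, proportional to 1 / wavelength^4."""
    return (reference_nm / wavelength_nm) ** 4


def rayleigh_phase(cos_theta: float) -> float:
    """Rayleigh phase function: relative amount of light scattered at angle theta."""
    return 3.0 / (16.0 * math.pi) * (1.0 + cos_theta ** 2)


def sky_color(sun_dir, view_dir):
    """Unnormalised single-scattering sky colour; both directions are unit vectors."""
    cos_theta = sum(s * v for s, v in zip(sun_dir, view_dir))
    phase = rayleigh_phase(cos_theta)
    return {name: rayleigh_strength(wl) * phase for name, wl in WAVELENGTHS_NM.items()}


# Sun straight overhead, looking at the horizon (90 degrees from the sun).
color = sky_color(sun_dir=(0.0, 0.0, 1.0), view_dir=(1.0, 0.0, 0.0))
print({k: round(v, 3) for k, v in color.items()})
```

Moving `sun_dir` over time is all a day-night cycle needs as input here; the toy omits the extinction along long light paths that produces sunset colours.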

Source: YouTube.

Bounded by GPU or CPU?

Before we dig deeper, we must understand the roles of the CPU and GPU in running a game. The CPU (usually made by Intel or AMD) is the general-purpose processor that handles most of the game’s computation, such as game logic, physics, and AI. In contrast, the GPU (traditionally made by NVIDIA or AMD) is responsible for graphical computation. Both need to work together to render each frame of a game. If one is slower than the other, it is what “bounds” the rendering of a frame, which reduces the framerate or introduces choppiness. To simplify what’s happening, I always use the 2v2 cake-building minigame from Mario Party.

The cake represents a frame being constructed, and both the CPU (Mario) and the GPU (Peach) need to put it together for it to be complete. If one of them is slow or misses, the cake takes longer to complete; likewise, the frame takes longer to render.
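The cake analogy can be turned into a rough back-of-the-envelope model. The Python sketch below assumes the CPU and GPU work as a pipeline (the CPU prepares the next frame while the GPU draws the current one), so the slower of the two sets the frame time. The timings are hypothetical, and real frame pacing is messier.

```python
def frame_stats(cpu_ms: float, gpu_ms: float):
    """Estimate framerate and bottleneck for one frame.

    Assumes a pipelined CPU and GPU, so the slower stage sets the pace.
    """
    frame_ms = max(cpu_ms, gpu_ms)
    fps = 1000.0 / frame_ms
    if cpu_ms > gpu_ms:
        bound = "CPU"
    elif gpu_ms > cpu_ms:
        bound = "GPU"
    else:
        bound = "balanced"
    return fps, bound


# Hypothetical timings: a fast GPU cannot help if the CPU needs 20 ms per frame.
print(frame_stats(cpu_ms=20.0, gpu_ms=8.0))   # (50.0, 'CPU')
print(frame_stats(cpu_ms=10.0, gpu_ms=12.5))  # (80.0, 'GPU')
```

In the first case, upgrading the GPU changes nothing; only a faster CPU (a quicker Mario) raises the framerate.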

For Crysis, although much of the detailed graphical computation was done on the GPU, it’s important to note that issuing the graphics instructions was done on the CPU. This was due to how DirectX 9 and 10 (the graphics APIs that CryEngine 2 used) carried out those instructions. Unfortunately, DirectX 9 and 10 processed these instructions on a single thread, which meant that at most one or two CPU cores were being used.

This, unfortunately, was not aligned with the direction CPU technology was heading in: CPUs evolved to have more cores (with many high-end CPUs sporting eight cores) while relying less on increasing the clock speed of each core. This is the crux of the challenge PCs face in running Crysis.
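Why don’t extra cores help? A standard way to reason about it is Amdahl’s law: only work that can be spread across threads gets faster with more cores, while single-threaded work (like DirectX 9/10 instruction submission) only speeds up with a faster core. The sketch below applies that idea to a hypothetical CPU frame budget. The 16 ms serial / 4 ms parallel split and the assumption that speed scales purely with clock (ignoring per-clock improvements in newer CPUs) are illustrative, not measurements of Crysis.

```python
def cpu_frame_ms(serial_ms: float, parallel_ms: float, cores: int,
                 clock_ghz: float, base_clock_ghz: float = 3.0) -> float:
    """CPU time per frame under Amdahl's law, scaled by clock speed.

    serial_ms and parallel_ms are hypothetical costs measured on a single
    base_clock_ghz core. Only the parallel part is divided across cores; this
    toy model assumes speed scales linearly with clock and ignores IPC gains.
    """
    clock_speedup = clock_ghz / base_clock_ghz
    return (serial_ms + parallel_ms / cores) / clock_speedup


# Hypothetical split: single-threaded draw submission dominates the frame.
baseline = cpu_frame_ms(serial_ms=16.0, parallel_ms=4.0, cores=2, clock_ghz=3.0)
more_cores = cpu_frame_ms(serial_ms=16.0, parallel_ms=4.0, cores=8, clock_ghz=3.0)
faster_clock = cpu_frame_ms(serial_ms=16.0, parallel_ms=4.0, cores=2, clock_ghz=4.2)
print(round(baseline, 2), round(more_cores, 2), round(faster_clock, 2))  # 18.0 16.5 12.86
```

Quadrupling the core count trims only 1.5 ms in this toy, while the 40% clock bump saves about 5 ms, which is why the industry’s shift toward more cores did little for Crysis.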

Clock speeds (3 GHz → 4.2 GHz) have not kept pace with the increase in resolution since 2007 (4M pixels → 33M pixels), which means Crysis may get harder to run as time goes by. Alright, that’s not 100% accurate, as many high-end machines can now run Crysis at 4K above 60 FPS most of the time, but the point remains valid.
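Putting the rounded figures above side by side makes the gap explicit. This is only arithmetic on the quoted numbers; it compares growth rates and says nothing about actual framerates.

```python
# Rounded figures quoted in the article.
pixels_2007, pixels_now = 4e6, 33e6           # pixels on screen
clock_2007_ghz, clock_now_ghz = 3.0, 4.2      # per-core clock speed

pixel_growth = pixels_now / pixels_2007       # how many times more pixels
clock_growth = clock_now_ghz / clock_2007_ghz  # how many times faster each core is
print(f"pixels: {pixel_growth:.2f}x, clock: {clock_growth:.2f}x, "
      f"gap: {pixel_growth / clock_growth:.1f}x")
# pixels: 8.25x, clock: 1.40x, gap: 5.9x
```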

So, back to the original question: Can it run Crysis? Probably. With most modern hardware, you’ll get 60 frames per second most of the time. But will it run as silky smooth as other games on your 240 Hz monitor? Highly improbable; that will likely remain a pipe dream for all of us.

Tristan Jung is an engineering manager during the day and plays too many video games at night. You can follow him on Twitter at https://twitter.com/3_stan